While your GPU is under ML training workload, you can run these commands to check the current load of a specific GPU. The -d followed by CLOCK, POWER, PERFORMANCE, and MEMORY option allows you to display the performance of your GPUs. The -q option allows you to query various GPU state information. Processes - a list of processes running on a GPU Status - the current statusClocks - the current clock speeds Nvidia-smi command allows you to access the following information of your NVIDIA GPU: To learn more about nvidia smi command, visit. It can also be used to query and configure the devices, and to monitor their overall health and performance.

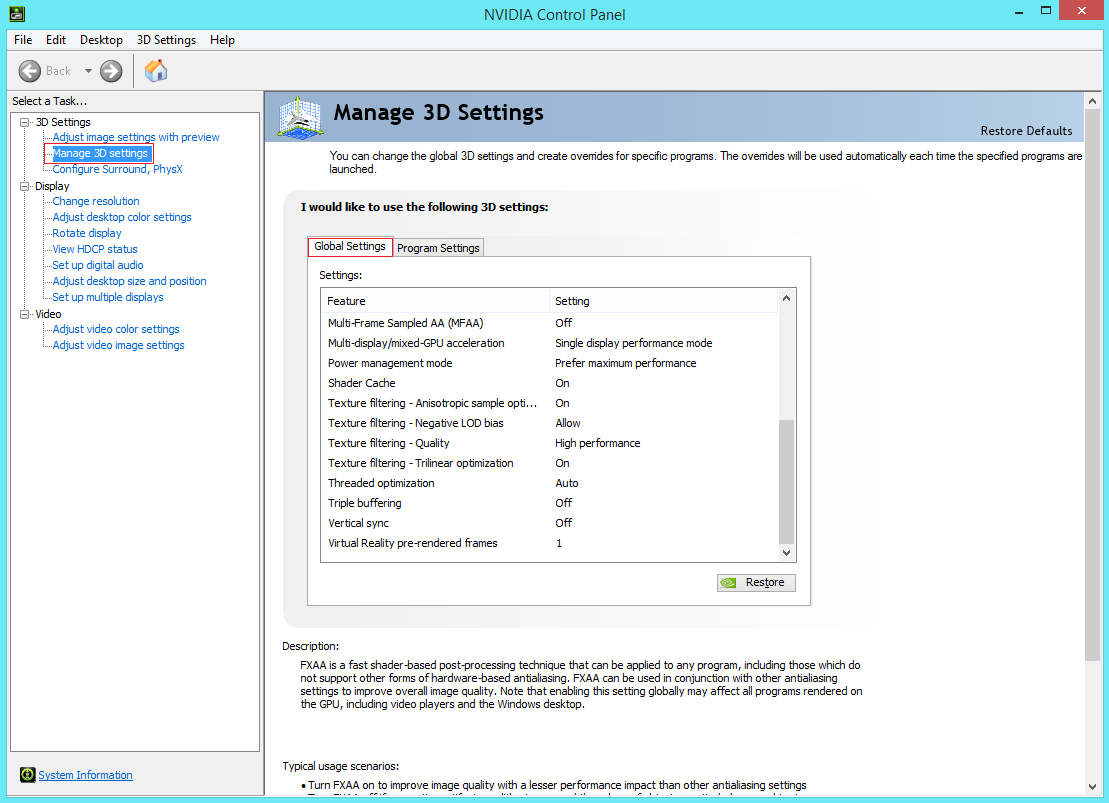

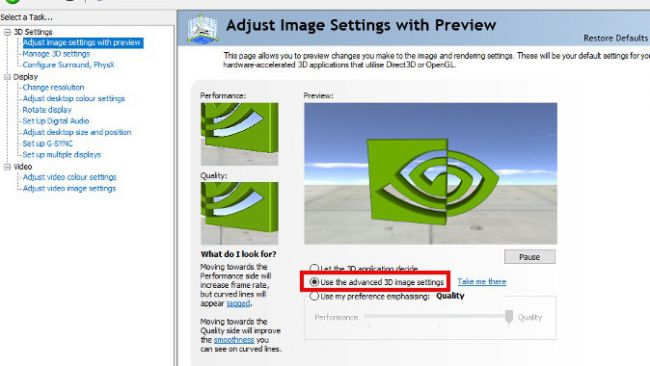

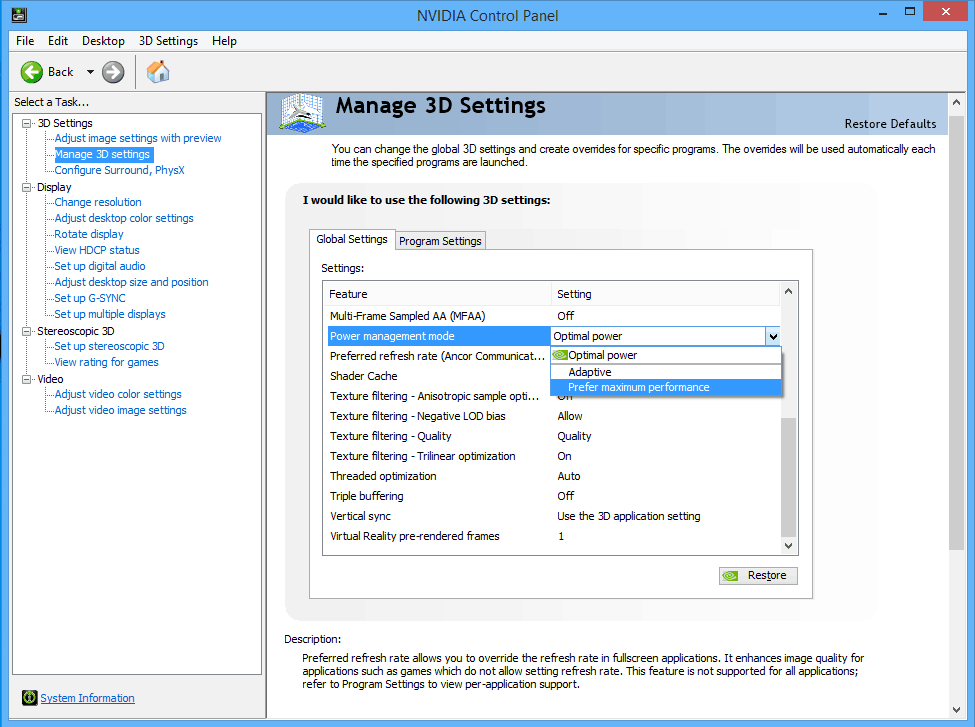

This interface can monitor and manage each of NVIDIA's Tesla, GRID, and GeForce devices from a local or a remote host. The NVIDIA System Management Interface (nvidia-smi) is a command line utility that provides management and monitoring capabilities for NVIDIA GPUs and their drivers. To check the performance state of your graphics card, we suggest using various comment-line-interfaces (CLI). To take full advantage of specialized ML hardware, it’s imperative to ensure that your graphics card is running at the optimal speed. Modern ML servers have multiple GPUs to allow for more flexibility this, however, adds more complexity to both power and heat management. To get the maximum performance out of your GPU, monitor power consumption and ensure that the GPU does not overheat. Throttling can cause the GPU to slow down or even shut off completely. Overheating causes GPU performance to degrade because the heat makes the GPU throttle itself in order to prevent damage. Overheating components: GPUs can generate a lot of heat and, therefore, are subject to overheating. In addition, voltage fluctuations are detrimental for GPUs because they can cause the GPU to overheat and potentially damage the card.Ĥ. An inadequately run power supply unit (PSU) can damage a GPU and related components. Power consumption: GPUs can consume a lot of power, which ultimately impacts performance and increases operating costs. Limited computational resources: GPUs tend to have limited computational resources, which can impact performance when training large neural networks or running complex algorithms.ģ.

Limited memory bandwidth: GPUs typically have limited memory bandwidth, which can impact performance when training large neural networks.Ģ. Some potential performance issues with GPUs can include the following:ġ. If your interest includes using NVIDA-GPUs for machine learning, please refer to our blogpost to learn about a containerized toolkit for ML and Computer Vision (CV) libraries.

Through this blog post, we would like to share some of our expertise on improving GPU performance. One of our roles involves maintaining hardware for cloud computing environments related to Machine Learning (ML) model training utilizing Nvidia-GPUs.Ī GPU is an important component for ML. (DMC) would like to share some of the ways you can diagnose and fix your Nvidia Graphics Processing Unit-related (GPU) issues on a machine learning compute server.ĭMC provides and assembles computing hardware for numerous organizations and academic institutions. In the spirit of continually contributing to the open-source community, Data Machines Corp.
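The nvidia-smi checks described in this post can also be scripted, which is handy on servers with several GPUs. The following is a minimal sketch, not DMC's own tooling: it assumes nvidia-smi is on the PATH and uses its `--query-gpu` CSV output. The helper names (`parse_gpu_csv`, `query_gpus`, `too_hot`) and the 85 °C threshold are hypothetical choices made for illustration; check your card's documented limits before relying on any threshold.

```python
import shutil
import subprocess

# Fields requested through nvidia-smi's --query-gpu interface.
FIELDS = ["index", "name", "temperature.gpu", "power.draw",
          "utilization.gpu", "memory.used", "memory.total", "pstate"]


def parse_gpu_csv(text):
    """Parse `--format=csv,noheader,nounits` output into one dict per GPU.

    Assumes no field value (for example the GPU name) contains a comma.
    """
    rows = []
    for line in text.strip().splitlines():
        values = [v.strip() for v in line.split(",")]
        if len(values) != len(FIELDS):
            continue  # skip lines that do not match the requested fields
        rows.append(dict(zip(FIELDS, values)))
    return rows


def query_gpus():
    """Run nvidia-smi and return parsed rows; return [] if it is unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []
    cmd = ["nvidia-smi", "--query-gpu=" + ",".join(FIELDS),
           "--format=csv,noheader,nounits"]
    result = subprocess.run(cmd, capture_output=True, text=True)
    if result.returncode != 0:
        return []  # e.g. driver not loaded
    return parse_gpu_csv(result.stdout)


def too_hot(rows, limit_c=85.0):
    """Return the indices of GPUs at or above limit_c (hypothetical threshold)."""
    hot = []
    for row in rows:
        try:
            if float(row["temperature.gpu"]) >= limit_c:
                hot.append(row["index"])
        except ValueError:
            pass  # some devices report a non-numeric value for this field
    return hot


if __name__ == "__main__":
    gpus = query_gpus()
    for gpu in gpus:
        print(gpu)
    print("GPUs at or above the temperature limit:", too_hot(gpus))
```

Running the script during a training job prints one dictionary per GPU with its temperature, power draw, utilization, memory use, and performance state. Parsing is kept separate from the subprocess call so the logic can be exercised on saved output from a machine without a GPU.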
